Multi-Container workloads

Overview

A container workload is a compute resource: it runs your code. Unlike functions and batch jobs, container workloads run continuously and scale based on CPU and memory usage.

A container workload can consist of one or more running containers.

Similarly to functions and batch jobs, container workloads are serverless and fully managed. This means you don't have to worry about administration tasks such as provisioning and managing servers.

The container image can be supplied in 3 different ways:

- built automatically from your source code by Stacktape

- built using a supplied Dockerfile by Stacktape

- pre-built images

Container workloads run securely within a Virtual Private Cloud (VPC). You can expose ports of your containers by routing traffic from HTTP API Gateways and load balancers using event integrations.

Under the hood

Under the hood, Stacktape uses AWS ECS (Elastic Container Service) to orchestrate your containers. Containers can be run using Fargate or EC2 instances:

- Fargate - technology which allows you to run containers without having to manage servers or clusters. This means you don't have to worry about administration tasks such as scaling, VM security, OS security & much more.

- EC2 instances - VMs running on AWS

ECS Services are self-healing: if your container instance dies for any reason, it is automatically replaced with a healthy instance. They auto-scale out of the box (within configured boundaries), leveraging the AWS Application Auto Scaling service.

When to use

If you are unsure which resource type is best suited for your app, the following table provides a short comparison of all container-based resource types offered by Stacktape.

| Resource type | Description | Use-cases |
|---|---|---|
| web-service | continuously running container with public endpoint and URL | public APIs, websites |
| private-service | continuously running container with private endpoint | private APIs, services |
| worker-service | continuously running container not accessible from outside | continuous processing |
| multi-container-workload | custom multi container workload - you can customize accessibility for each container | more complex use-cases requiring customization |
| batch-job | simple container job - container is destroyed after job is done | one-off/scheduled processing jobs |

Advantages

- Control over underlying environment - Container workloads can run any Docker image or image built using your own Dockerfile.

- Price for workloads with predictable load - Compared to functions, container workloads are cheaper if your workload has a predictable load.

- Load-balanced and auto-scalable - Container workloads can automatically horizontally scale based on the CPU and Memory utilization.

- High availability - Container workloads run in multiple Availability Zones.

- Secure by default - Underlying environment is securely managed by AWS.

Disadvantages

- Scaling speed - Unlike lambda functions that scale almost instantly, container workloads require more time to add another container: from several seconds to a few minutes.

- Not fully serverless - While container workloads can automatically scale up and down, they can't scale to 0. This means that if your workload is unused, you still pay for at least one instance (minimum ~$8/month).

Basic usage

```typescript
import express from 'express';

const app = express();

app.get('/', async (req, res) => {
  res.send({ message: 'Hello' });
});

app.listen(process.env.PORT, () => {
  console.info(`Server running on port ${process.env.PORT}`);
});
```

Example server container written in TypeScript

```yaml
resources:
  mainGateway:
    type: http-api-gateway

  apiServer:
    type: multi-container-workload
    properties:
      resources:
        cpu: 2
        memory: 2048
      scaling:
        minInstances: 1
        maxInstances: 5
      containers:
        - name: api-container
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/main.ts
          environment:
            - name: PORT
              value: 3000
          events:
            - type: http-api-gateway
              properties:
                method: '*'
                path: /{proxy+}
                containerPort: 3000
                httpApiGatewayName: mainGateway
```

Container connected to HTTP API Gateway

Containers

Every container workload consists of 1 or multiple containers.

You can configure the following properties of your container:

Image

- Docker container is a running instance of a Docker image.

- The image for your container can be supplied in 4 different ways:
  - images built using stacktape-image-buildpack
  - images built using external-buildpack
  - images built from the custom-dockerfile
  - prebuilt-images

Environment variables

Most commonly used types of environment variables:

- Static - string, number or boolean (will be stringified).

- Result of a custom directive.

- Referenced property of another resource (using the $ResourceParam directive). To learn more, refer to the referencing parameters guide. If you use environment variables to inject information about resources into your script, see also the connectTo property, which simplifies this process.

- Value of a secret (using $Secret directive).

```yaml
environment:
  - name: STATIC_ENV_VAR
    value: my-env-var
  - name: DYNAMICALLY_SET_ENV_VAR
    value: $MyCustomDirective('input-for-my-directive')
  - name: DB_HOST
    value: $ResourceParam('myDatabase', 'host')
  - name: DB_PASSWORD
    value: $Secret('dbSecret.password')
```
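Since static values are stringified, application code typically parses and validates environment variables at startup. The following is a minimal sketch of that idea, not part of Stacktape itself: the helper names `requireEnv` and `readPort` are hypothetical, and the default port of 3000 is an assumption taken from the examples on this page.

```typescript
type Env = Record<string, string | undefined>;

// Returns the value of a required variable, or fails fast with a clear message.
function requireEnv(env: Env, name: string): string {
  const value = env[name];
  if (value === undefined || value === '') {
    throw new Error(`Missing required environment variable: ${name}`);
  }
  return value;
}

// Environment variables always arrive as strings, so numeric values must be parsed.
function readPort(env: Env, fallback = 3000): number {
  const raw = env['PORT'];
  if (raw === undefined || raw === '') return fallback;
  const port = Number(raw);
  if (!Number.isInteger(port) || port < 1 || port > 65535) {
    throw new Error(`Invalid PORT value: ${raw}`);
  }
  return port;
}
```

Inside a container you would pass `process.env` to these helpers, for example `readPort(process.env)` or `requireEnv(process.env, 'DB_HOST')`.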

Dependencies between containers

Containers in a workload often rely on each other. In many cases, one needs to be started or successfully finish its execution before the other container can start.

Example: frontend container won't start until the backend container has successfully started.

```yaml
resources:
  myApiGateway:
    type: http-api-gateway

  myMultiContainerWorkload:
    type: multi-container-workload
    properties:
      containers:
        - name: frontend-container
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/client/index.ts
          dependsOn:
            # must match the name of the container defined below
            - containerName: api-container
              condition: START
          environment:
            - name: PORT
              value: 80
            - name: API_CONTAINER_PORT
              value: 3000
          events:
            - type: http-api-gateway
              properties:
                httpApiGatewayName: myApiGateway
                containerPort: 80
                path: '*'
                method: '*'
        - name: api-container
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/server/index.ts
          environment:
            - name: PORT
              value: 3000
          events:
            - type: workload-internal
              properties:
                containerPort: 3000
      resources:
        cpu: 2
        memory: 2048
```

Healthcheck

The purpose of the container health check is to monitor the health of the container from the inside.

Once an essential container of an instance is determined UNHEALTHY, the instance is automatically replaced with a new one.

- Example: A shell command sends a `curl` request every 20 seconds to determine if the service is available. If the request fails (or doesn't return within 5 seconds), the command exits with a non-zero exit code, and the healthcheck is considered failed.

```yaml
resources:
  myContainerWorkload:
    type: multi-container-workload
    properties:
      containers:
        - name: api-container
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/index.ts
          internalHealthCheck:
            healthCheckCommand: ['CMD-SHELL', 'curl -f http://localhost/ || exit 1']
            intervalSeconds: 20
            timeoutSeconds: 5
            startPeriodSeconds: 150
            retries: 2
      resources:
        cpu: 2
        memory: 2048
```
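The `curl -f` flag makes the command exit with a non-zero code when the server answers with an HTTP status of 400 or higher, so the application side of the check only has to return an error status when it is not healthy. Below is a minimal sketch of that logic; the function name `healthResponse` and the shape of the `checks` object are hypothetical and only illustrate the idea.

```typescript
type HealthResult = { statusCode: number; body: string };

// Maps the state of internal dependencies to an HTTP response.
// Any failing check produces 503, which `curl -f` treats as a failure.
function healthResponse(checks: Record<string, boolean>): HealthResult {
  const failing = Object.keys(checks).filter((name) => !checks[name]);
  if (failing.length === 0) {
    return { statusCode: 200, body: JSON.stringify({ status: 'ok' }) };
  }
  return { statusCode: 503, body: JSON.stringify({ status: 'unhealthy', failing }) };
}
```

A route handler for `/` (the path used by the health check command above) could call this function and forward `statusCode` and `body` to the client.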

Shutdown

- When a running container workload instance is deregistered (removed), all running containers receive a `SIGTERM` signal.

- By default, you then have 2 seconds to clean up. After 2 seconds, your process receives a `SIGKILL` signal.

- You can set the timeout using the `stopTimeout` property (must be between 2 and 120 seconds).

- Setting a stop timeout gives the container time to "finish the job" or to "clean up" when a new version of the container is deployed or when the service is deleted.

```typescript
process.on('SIGTERM', () => {
  console.info('Received SIGTERM signal. Cleaning up and exiting process...');

  // Finish any outstanding requests, or close a database connection...

  process.exit(0);
});
```

Cleaning up before container shutdown.
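Because the process is killed once the stop timeout elapses, cleanup work is usually bounded by a time budget that is shorter than the configured timeout. The sketch below shows one way to do that; `shutdownGracefully` is a hypothetical helper, and the budget value is something you would choose yourself based on your `stopTimeout`.

```typescript
type Cleanup = () => Promise<void>;

// Runs all cleanup steps in parallel, but never waits longer than `budgetMs`.
async function shutdownGracefully(cleanups: Cleanup[], budgetMs: number): Promise<'clean' | 'timed-out'> {
  const work = Promise.all(cleanups.map((cleanup) => cleanup())).then(() => 'clean' as const);

  // Give up once the budget is spent, so the process can exit before SIGKILL arrives.
  const timeout = new Promise<'timed-out'>((resolve) => {
    const timer = setTimeout(() => resolve('timed-out'), budgetMs);
    // Cancel the timer as soon as the cleanup settles, so it does not keep the process alive.
    const cancel = () => clearTimeout(timer);
    work.then(cancel, cancel);
  });

  return Promise.race([work, timeout]);
}
```

Inside a `SIGTERM` handler, this could be used as `shutdownGracefully([closeServer, closeDb], 1500).then(() => process.exit(0))`, where `closeServer` and `closeDb` stand for your own cleanup functions.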

Logging

- Every time your code outputs (prints) something to `stdout` or `stderr`, your log will be captured and stored in an AWS CloudWatch log group.

- You can browse your logs in 2 ways:
  - Browse logs in the AWS CloudWatch console. To get a direct link to your logs, you have 2 options:
    - Go to the Stacktape console. The link is among the information about your stack and resource.
    - Use the `stacktape stack-info` command.
  - Browse logs using the `stacktape logs` command, which prints logs to the console.

- Please note that storing log data can become costly over time. To avoid excessive charges, you can configure `retentionDays`.

Forwarding logs

It is possible to forward logs to third-party services and databases. See the Forwarding logs page for more information and examples.

Events

- Events are used to route events (requests) from the configured integration to the specified port of your container.

- Stacktape supports 4 different event sources (integrations):

HTTP API event

Forwards requests from the specified HTTP API Gateway.

- Example: all incoming `GET` requests to myApiGateway with path `/my-path` will be routed to port `80` of the api-container in the myApp workload.

```yaml
resources:
  myApiGateway:
    type: http-api-gateway

  myApp:
    type: multi-container-workload
    properties:
      containers:
        - name: api-container
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/index.ts
          events:
            - type: http-api-gateway
              properties:
                httpApiGatewayName: myApiGateway
                containerPort: 80
                path: '/my-path'
                method: GET
      resources:
        cpu: 2
        memory: 2048
```

- When the multi-container-workload scales (i.e. there is more than one instance of workload), http-api-gateway distributes incoming requests to the instances randomly.

Application Load Balancer event

Forwards requests from the specified Application load balancer. Application load balancer integration allows you to filter and forward incoming requests based on any part of request including path, query params, headers and others.

```yaml
resources:
  myLoadBalancer:
    type: application-load-balancer

  myApp:
    type: multi-container-workload
    properties:
      containers:
        - name: api-container
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/index.ts
          events:
            - type: application-load-balancer
              properties:
                loadBalancerName: myLoadBalancer
                containerPort: 80
                priority: 1
                paths: ['*']
      resources:
        cpu: 2
        memory: 2048
```

All incoming requests will be routed to the port 80 of the api-container in myApp workload.

- When the multi-container-workload scales (i.e. there is more than one instance of workload), application-load-balancer distributes incoming requests to the instances in a round robin fashion.

Internal port (workload-internal)

Opens the specified port of the container for communication with other containers of this workload.

- Example: backend container exposes port `3000`, which is reachable from the frontend container, but not from the internet. Frontend container exposes its port `80` to the internet through the HTTP API Gateway.

```yaml
resources:
  myApiGateway:
    type: http-api-gateway

  myApp:
    type: multi-container-workload
    properties:
      containers:
        - name: frontend
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/frontend/index.ts
          dependsOn:
            - containerName: backend
              condition: START
          environment:
            - name: PORT
              value: 80
            - name: BACKEND_PORT
              value: 3000
          events:
            - type: http-api-gateway
              properties:
                httpApiGatewayName: myApiGateway
                containerPort: 80
                path: /my-path
                method: GET
        - name: backend
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/backend/index.ts
          environment:
            - name: PORT
              value: 3000
          events:
            - type: workload-internal
              properties:
                containerPort: 3000
      resources:
        cpu: 2
        memory: 2048
```

Private port (service-connect)

Opens the specified port of the container to other workloads of the stack (web-services, multi-container-workloads, private-services).

- The combination of alias and container port creates a unique identifier. You can then reach the compute resource using a URL in the form `protocol://alias:containerPort`, for example `http://my-service:8080` or `grpc://appserver:8080`.

- By default, the alias is derived from the name of your resource and container, i.e. `resourceName-containerName`.

- Example: the `internalService` workload is open for connections from `publicService` on address `internalservice-api:3000`.

```yaml
resources:
  internalService:
    type: multi-container-workload
    properties:
      containers:
        - name: api
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/private/index.ts
          events:
            - type: service-connect
              properties:
                containerPort: 3000
      resources:
        cpu: 2
        memory: 2048

  publicService:
    type: multi-container-workload
    properties:
      containers:
        - name: api
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/public/index.ts
      resources:
        cpu: 2
        memory: 2048
```
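To illustrate how the address in the example above is put together, here is a small sketch that builds a service-connect address from a resource name, a container name and a port. The function names are hypothetical, and lowercasing the default alias is an assumption inferred from the example address `internalservice-api:3000`.

```typescript
// Builds `alias:containerPort`. When no alias is given, it is derived as
// `resourceName-containerName` (lowercased; assumption based on the example above).
function serviceConnectAddress(
  resourceName: string,
  containerName: string,
  containerPort: number,
  alias?: string
): string {
  const effectiveAlias = alias ?? `${resourceName}-${containerName}`.toLowerCase();
  return `${effectiveAlias}:${containerPort}`;
}

// Prefixes the address with a protocol, giving `protocol://alias:containerPort`.
function serviceConnectUrl(protocol: string, address: string): string {
  return `${protocol}://${address}`;
}
```

Code running in `publicService` could then call the private API using `serviceConnectUrl('http', serviceConnectAddress('internalService', 'api', 3000))`.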

Resources

In the resources section, you specify the amount of cpu/memory and the EC2 instance types available to your workload.

There are two ways to run your containers:

using Fargate

- Fargate is a serverless, pay-as-you-go compute engine for running containers without having to manage underlying instances (servers).

- With Fargate you only need to specify the `cpu` and `memory` required for your workload.

- While slightly more expensive, using Fargate is often preferred in strictly regulated industries since running containers on Fargate meets the standards for PCI DSS Level 1, ISO 9001, ISO 27001, ISO 27017, ISO 27018, SOC 1, SOC 2, SOC 3 out of the box.

using EC2 instances

- EC2 instances are virtual machines (VMs) running on the AWS cloud.

- Containers are placed on the EC2 instances, which are added and removed depending on workload needs.

- You can choose the instance type that best suits your resource requirements.

Whether you choose to use Fargate or EC2 instances, your containers run securely within your VPC.

The following applies when configuring the resources section of your workload:

When specifying resources, there are two underlying compute engines to use:

- Fargate - abstracts the server and cluster management away from the user, allowing them to run containers without managing the underlying servers, simplifying deployment and management of applications but offering less control over the computing environment.

- EC2 (Elastic Compute Cloud) - provides granular control over the underlying servers (instances). By choosing `instanceTypes`, you get complete control over the computing environment and the ability to optimize for specific workloads.

To use Fargate: do NOT specify `instanceTypes`, and specify the `cpu` and `memory` properties.

To use EC2 instances: specify `instanceTypes`.

If your container workload has multiple containers, the assigned resources are shared between them.

Using Fargate

If you do not specify instanceTypes property in resources section, Fargate is used to run your containers.

```yaml
resources:
  myContainerWorkload:
    type: multi-container-workload
    properties:
      containers:
        - name: api-container
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/index.ts
      resources:
        cpu: 0.25
        memory: 512
```

Example using Fargate

Using EC2 instances

If you specify instanceTypes property in resources section, EC2 instances are used to run your containers.

- EC2 instances are automatically added or removed to meet the scaling needs of your compute resource (see also the `scaling` property).

- When using `instanceTypes`, we recommend specifying only one instance type and NOT setting the `cpu` or `memory` properties. By doing so, Stacktape will set the cpu and memory to fit the instance precisely, resulting in optimal resource utilization.

- Stacktape leverages ECS Managed Scaling with target utilization 100%. This means that no unused EC2 instances (unused = not running your workload/service) are kept running. Unused EC2 instances are terminated.

- Ordering in the `instanceTypes` list matters. Instance types which are higher on the list are preferred over the instance types which are lower on the list. Only when an instance type higher on the list is not available will the next instance type on the list be used.

- For an exhaustive list of available EC2 instance types, refer to AWS docs.

To ensure that your containers are running on patched and up-to-date EC2 instances, your compute resource gets automatically re-deployed once a week (Sunday 03:00 UTC). Your compute resource stays available throughout this process.

```yaml
resources:
  myContainerWorkload:
    type: multi-container-workload
    properties:
      containers:
        - name: api-container
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/index.ts
      resources:
        instanceTypes:
          - c5.large
```

Example using EC2 instances

Placing containers on EC2

Stacktape aims to achieve 100% utilization of your EC2 instances. However, this is not always possible, and the following behavior can be expected:

- If you only specify one type in `instanceTypes` and you do NOT set `memory` and `cpu`, then Stacktape sets `memory` and `cpu` to fit the EC2 instance type precisely (see also the Default cpu and memory section). This means that if your workload scales (a new instance of the workload is added), a new EC2 instance is added as well.

- If you specify `cpu` and `memory` properties alongside `instanceTypes`, they will be respected. If the EC2 instance is larger (has more resources) than the specified `cpu` and `memory`, AWS places instances of your workload on available EC2 instances using the binpack strategy. This strategy aims to put as many instances of your workload on the available EC2 instances as possible (to maximize utilization).
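As a rough illustration of the binpack behavior, the sketch below estimates how many workload instances fit on a single EC2 instance. It is a simplified model, not the actual ECS placement logic: the function name is hypothetical, and it ignores any CPU or memory that the operating system and the ECS agent reserve on the host, so real numbers may be lower.

```typescript
type Capacity = { cpu: number; memory: number }; // cpu in vCPUs, memory in MB

// Upper bound on the number of workload instances that fit on one host.
// The scarcer of the two resources (cpu or memory) decides.
function workloadInstancesPerHost(host: Capacity, workload: Capacity): number {
  if (workload.cpu <= 0 || workload.memory <= 0) {
    throw new Error('cpu and memory of the workload must be positive');
  }
  const byCpu = Math.floor(host.cpu / workload.cpu);
  const byMemory = Math.floor(host.memory / workload.memory);
  return Math.min(byCpu, byMemory);
}

// Nominal size of a c5.large instance: 2 vCPUs and 4 GiB of memory.
const c5Large: Capacity = { cpu: 2, memory: 4096 };
const perHost = workloadInstancesPerHost(c5Large, { cpu: 0.5, memory: 1024 });
```

With these nominal numbers, a workload configured with `cpu: 0.5` and `memory: 1024` is limited equally by CPU and memory, while a workload with `cpu: 1` and `memory: 3072` would be limited by memory.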

Default cpu and memory

- If you do not specify `cpu`, then the entire capacity of the EC2 instance CPU is shared between containers running on the EC2 instance.

- If you do not specify `memory`, then `memory` is set to the maximum possible value such that all EC2 instance types listed in `instanceTypes` are able to provide that amount of memory. In other words: Stacktape sets the memory so that the smallest instance type in `instanceTypes` (in terms of memory) is able to provide that amount of memory.
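The memory rule above boils down to taking the minimum over the listed instance types. A minimal sketch, assuming you supply the usable memory per instance type yourself (the function name and the lookup table are hypothetical; the values below are nominal instance sizes, and the memory actually available to containers may be lower):

```typescript
// Returns the largest memory value that every listed instance type can provide,
// which is the memory of the smallest listed instance type.
function defaultMemoryMb(instanceTypes: string[], memoryMbByType: Record<string, number>): number {
  if (instanceTypes.length === 0) {
    throw new Error('instanceTypes must not be empty');
  }
  const sizes = instanceTypes.map((type) => {
    const memory = memoryMbByType[type];
    if (memory === undefined) throw new Error(`Unknown instance type: ${type}`);
    return memory;
  });
  return Math.min(...sizes);
}

// Nominal memory sizes: c5.large has 4 GiB, m5.large has 8 GiB.
const nominalMemoryMb: Record<string, number> = { 'c5.large': 4096, 'm5.large': 8192 };
const sharedMemory = defaultMemoryMb(['m5.large', 'c5.large'], nominalMemoryMb);
```

This also shows why mixing instance types of very different sizes can waste memory on the larger ones.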

Scaling

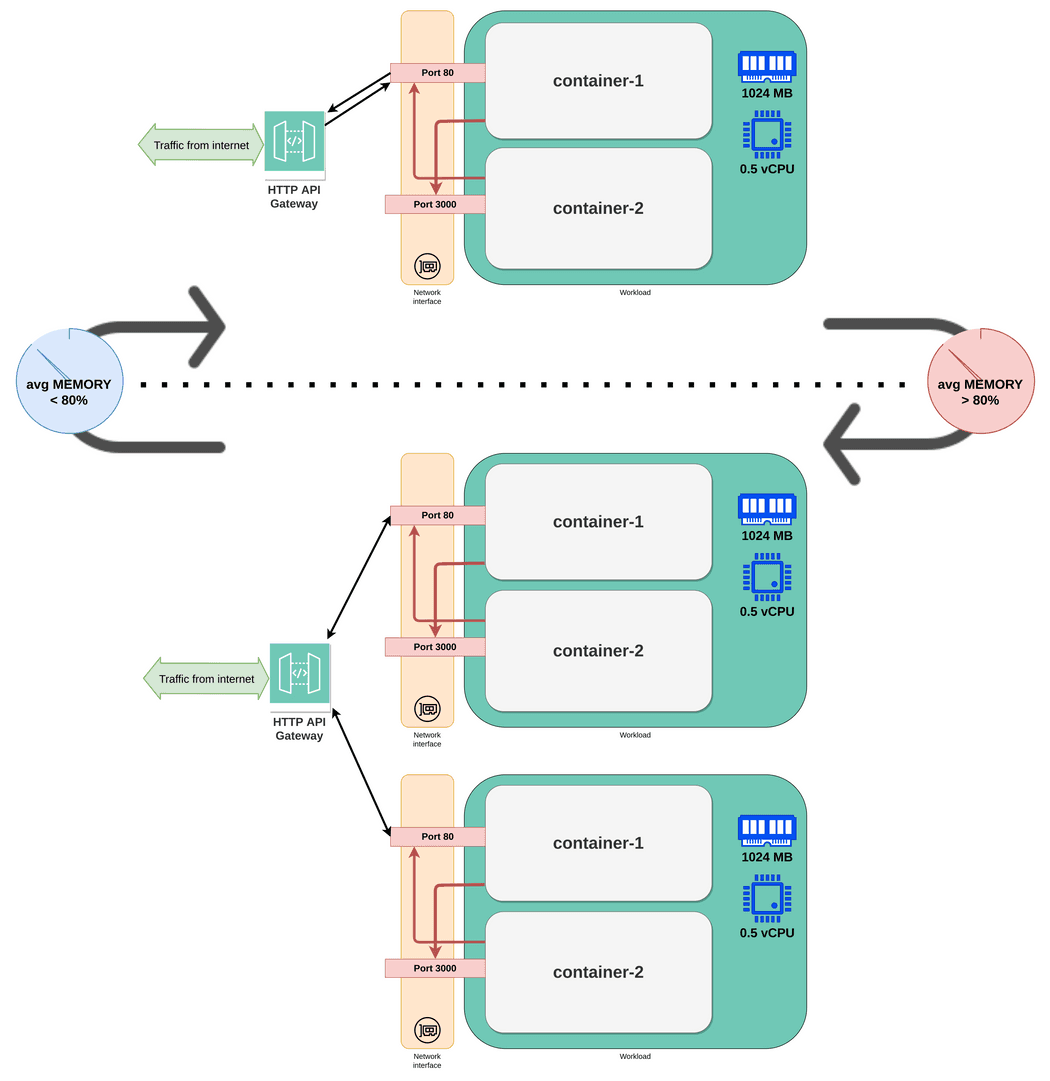

In scaling section, you can configure scaling behavior of your container workload. You can configure:

- Minimum and maximum amount of concurrently running instances of your workload.

- Conditions which trigger the scaling (up or down) using a scaling policy.

Scaling policy

A scaling policy specifies CPU and memory metric thresholds which trigger the scaling process.

Depending on the thresholds, the workload can either scale out (add instances) or scale in (remove instances).

If both `keepAvgCpuUtilizationUnder` and `keepAvgMemoryUtilizationUnder` are used, the workload will scale out if one of the metrics is above the target value. However, to scale in, both of these metrics need to be below their respective target values.

Scaling policy is more aggressive in adding capacity than in removing capacity. For example, if the policy's specified metric reaches its target value, the policy assumes that your application is already heavily loaded. So it responds by adding capacity proportional to the metric value as fast as it can. The higher the metric, the more capacity is added.

When the metric falls below the target value, the policy expects that utilization will eventually increase again. Therefore it slows down the scale-in process by removing capacity only when utilization passes a threshold that is far enough below the target value (usually 20% lower).

```yaml
resources:
  myContainerWorkload:
    type: multi-container-workload
    properties:
      containers:
        - name: container-1
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/cont1/index.ts
          events:
            - type: http-api-gateway
              properties:
                httpApiGatewayName: myApiGateway
                containerPort: 80
                method: '*'
                path: '*'
        - name: container-2
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/cont2/index.ts
          events:
            - type: workload-internal
              properties:
                containerPort: 3000
      resources:
        cpu: 0.5
        memory: 1024
      scaling:
        minInstances: 1
        maxInstances: 5
        scalingPolicy:
          keepAvgMemoryUtilizationUnder: 80
          keepAvgCpuUtilizationUnder: 80
```

Example usage of scaling configuration
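The scale-out and scale-in rules described in this section can be modeled with a small decision function. This is a simplified sketch, not the actual AWS Application Auto Scaling algorithm: the function name is hypothetical, and the `scaleInMargin` of 0.2 is one interpretation (assumption) of "usually 20% lower", applied relative to the target value. It also ignores `minInstances` and `maxInstances`.

```typescript
type ScalingPolicy = {
  keepAvgCpuUtilizationUnder?: number;
  keepAvgMemoryUtilizationUnder?: number;
};
type Utilization = { cpu: number; memory: number }; // average utilization in percent
type Decision = 'scale-out' | 'scale-in' | 'hold';

function scalingDecision(policy: ScalingPolicy, current: Utilization, scaleInMargin = 0.2): Decision {
  // Collect only the metrics that the policy actually tracks.
  const tracked: Array<{ value: number; target: number }> = [];
  if (policy.keepAvgCpuUtilizationUnder !== undefined) {
    tracked.push({ value: current.cpu, target: policy.keepAvgCpuUtilizationUnder });
  }
  if (policy.keepAvgMemoryUtilizationUnder !== undefined) {
    tracked.push({ value: current.memory, target: policy.keepAvgMemoryUtilizationUnder });
  }
  if (tracked.length === 0) return 'hold';

  // One metric above its target is enough to add capacity.
  if (tracked.some((metric) => metric.value > metric.target)) return 'scale-out';

  // Capacity is removed only when every metric is well below its target.
  if (tracked.every((metric) => metric.value < metric.target * (1 - scaleInMargin))) return 'scale-in';

  return 'hold';
}
```

With both targets set to 80 as in the example above, 90% CPU triggers a scale-out regardless of memory, while a scale-in requires both metrics to drop noticeably below 80%.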

Storage

- Each container workload instance has access to its own ephemeral storage. It's removed after the container workload instance is removed.

- It has a fixed size of 20GB.

- This storage is shared between all containers running in the container workload instance. However, if you have 2 concurrently running container workload instances, they do not share this storage.

- To store data persistently, consider using Buckets.

Accessing other resources

For most of the AWS resources, resource-to-resource communication is not allowed by default. This helps to enforce security and resource isolation. Access must be explicitly granted using IAM (Identity and Access Management) permissions.

Access control of Relational Databases is not managed by IAM. These resources are not "cloud-native" by design and have their own access control mechanism (connection string with username and password). They are accessible by default, and you don't need to grant any extra IAM permissions. You can further restrict the access to your relational databases by configuring their access control mode.

Stacktape automatically handles IAM permissions for the underlying AWS services that it creates (i.e. granting container workloads permission to write logs to Cloudwatch, allowing container workloads to communicate with their event source and many others).

If your workload needs to communicate with other infrastructure components, you need to add permissions manually. You can do this in 2 ways:

Using connectTo

- List of resource names or AWS services that this container workload will be able to access (basic IAM permissions will be granted automatically). Granted permissions differ based on the resource.

- Works only for resources managed by Stacktape in the `resources` section (not arbitrary Cloudformation resources).

- This is useful if you don't want to deal with IAM permissions yourself. Handling permissions using raw IAM role statements can be cumbersome, time-consuming and error-prone. Moreover, when using the `connectTo` property, Stacktape automatically injects information about the resource you are connecting to as environment variables into your workload.

```yaml
resources:
  photosBucket:
    type: bucket

  myContainerWorkload:
    type: multi-container-workload
    properties:
      containers:
        - name: apiContainer
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/index.ts
      connectTo:
        # access to the bucket
        - photosBucket
        # access to AWS SES
        - aws:ses
      resources:
        cpu: 0.25
        memory: 512
```

By referencing resources (or services) in connectTo list, Stacktape automatically:

- configures correct compute resource's IAM role permissions if needed

- sets up correct security group rules to allow access if needed

- injects relevant environment variables containing information about resource you are connecting to into the compute resource's runtime

  - names of environment variables use upper-snake-case and are in the form `STP_[RESOURCE_NAME]_[VARIABLE_NAME]`,
  - examples: `STP_MY_DATABASE_CONNECTION_STRING` or `STP_MY_EVENT_BUS_ARN`,
  - list of injected variables for each resource type can be seen below.
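The naming scheme can be sketched as a small helper that turns a resource name and a variable name into the injected environment variable name. The helper is hypothetical, and the camelCase to upper-snake-case conversion is an assumption inferred from the examples in this section (for instance, a resource that appears to be named `myDatabase` yielding `STP_MY_DATABASE_CONNECTION_STRING`).

```typescript
// Converts e.g. ('photosBucket', 'NAME') into 'STP_PHOTOS_BUCKET_NAME'.
function injectedEnvVarName(resourceName: string, variableName: string): string {
  const upperSnake = resourceName
    .replace(/([a-z0-9])([A-Z])/g, '$1_$2') // split camelCase word boundaries
    .replace(/[^A-Za-z0-9]+/g, '_') // replace dashes and other separators
    .toUpperCase();
  return `STP_${upperSnake}_${variableName}`;
}

// Reads an injected variable from an environment object such as process.env.
function readInjected(
  env: Record<string, string | undefined>,
  resourceName: string,
  variableName: string
): string | undefined {
  return env[injectedEnvVarName(resourceName, variableName)];
}
```

For the `photosBucket` example above, `readInjected(process.env, 'photosBucket', 'NAME')` would look up `STP_PHOTOS_BUCKET_NAME`.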

Granted permissions and injected environment variables are different depending on resource type:

Bucket

- Permissions:

- list objects in a bucket

- create / get / delete / tag object in a bucket

- Injected env variables: `NAME`, `ARN`

DynamoDB table

- Permissions:

- get / put / update / delete item in a table

- scan / query a table

- describe table stream

- Injected env variables: `NAME`, `ARN`, `STREAM_ARN`

MongoDB Atlas cluster

- Permissions:

  - Allows connection to a cluster with `accessibilityMode` set to `scoping-workloads-in-vpc`. To learn more about MongoDB Atlas clusters accessibility modes, refer to MongoDB Atlas cluster docs.
  - Creates access "user" associated with compute resource's role to allow for secure credential-less access to the cluster

- Injected env variables: `CONNECTION_STRING`

Relational(SQL) database

- Permissions:

  - Allows connection to a relational database with `accessibilityMode` set to `scoping-workloads-in-vpc`. To learn more about relational database accessibility modes, refer to Relational databases docs.

- Injected env variables: `CONNECTION_STRING`, `JDBC_CONNECTION_STRING`, `HOST`, `PORT` (in case of aurora multi instance cluster additionally: `READER_CONNECTION_STRING`, `READER_JDBC_CONNECTION_STRING`, `READER_HOST`)

Redis cluster

- Permissions:

  - Allows connection to a redis cluster with `accessibilityMode` set to `scoping-workloads-in-vpc`. To learn more about redis cluster accessibility modes, refer to Redis clusters docs.

- Injected env variables: `HOST`, `READER_HOST`, `PORT`

Event bus

- Permissions:

- publish events to the specified Event bus

- Injected env variables: `ARN`

Function

- Permissions:

- invoke the specified function

- invoke the specified function via url (if lambda has URL enabled)

- Injected env variables: `ARN`

Batch job

- Permissions:

- submit batch-job instance into batch-job queue

- list submitted job instances in a batch-job queue

- describe / terminate a batch-job instance

- list executions of state machine which executes the batch-job according to its strategy

- start / terminate execution of a state machine which executes the batch-job according to its strategy

- Injected env variables: `JOB_DEFINITION_ARN`, `STATE_MACHINE_ARN`

User auth pool

- Permissions:

  - full control over the user pool (`cognito-idp:*`)
    - for more information about allowed methods refer to AWS docs

- Injected env variables: `ID`, `CLIENT_ID`, `ARN`

SNS Topic

- Permissions:

- confirm/list subscriptions of the topic

- publish/subscribe to the topic

- unsubscribe from the topic

- Injected env variables: `ARN`, `NAME`

SQS Queue

- Permissions:

- send/receive/delete message

- change visibility of message

- purge queue

- Injected env variables: `ARN`, `NAME`, `URL`

Upstash Kafka topic

- Injected env variables: `TOPIC_NAME`, `TOPIC_ID`, `USERNAME`, `PASSWORD`, `TCP_ENDPOINT`, `REST_URL`

Upstash Redis

- Injected env variables: `HOST`, `PORT`, `PASSWORD`, `REST_TOKEN`, `REST_URL`, `REDIS_URL`

Private service

- Injected env variables: `ADDRESS`

aws:ses (Macro)

- Permissions:
  - gives full permissions to aws ses (`ses:*`).
    - for more information about allowed methods refer to AWS docs

Using iamRoleStatements

- List of raw IAM role statement objects. These will be appended to the container workload's role.

- Allows you to set granular control over your container workload's permissions.

- Can be used to give access to any Cloudformation resource

```yaml
resources:
  myContainerWorkload:
    type: multi-container-workload
    properties:
      containers:
        - name: apiContainer
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: server/index.ts
      iamRoleStatements:
        - Resource:
            - $CfResourceParam('NotificationTopic', 'Arn')
          Effect: 'Allow'
          Action:
            - 'sns:Publish'
      resources:
        cpu: 2
        memory: 2048

cloudformationResources:
  NotificationTopic:
    Type: 'AWS::SNS::Topic'
```

Deployment strategies

Using deployment strategies, you configure the way your multi-container-workload is updated when deploying a new version. By default, rolling update is used. However, you can use the `deployment` property to choose a different strategy.

- Using `deployment`, you can update the container workload in a live environment in a safe way, by shifting the traffic to the new version gradually.

- A gradual shift of traffic gives you the opportunity to test/monitor the workload during the update and to quickly roll back in case of a problem.

- Deployment supports multiple strategies:

- Canary10Percent5Minutes - Shifts 10 percent of traffic in the first increment. The remaining 90 percent is deployed five minutes later.

- Canary10Percent15Minutes - Shifts 10 percent of traffic in the first increment. The remaining 90 percent is deployed 15 minutes later.

- Linear10PercentEvery1Minute - Shifts 10 percent of traffic every minute until all traffic is shifted.

- Linear10PercentEvery3Minutes - Shifts 10 percent of traffic every three minutes until all traffic is shifted.

- AllAtOnce - Shifts all traffic to the updated container workload at once.

- You can validate/abort deployment(update) using lambda-function hooks.

When using deployment, your container workload must use application-load-balancer event integration

```yaml
resources:
  myLoadBalancer:
    type: application-load-balancer

  myApp:
    type: multi-container-workload
    properties:
      containers:
        - name: api-container
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/index.ts
          events:
            - type: application-load-balancer
              properties:
                loadBalancerName: myLoadBalancer
                containerPort: 80
                priority: 1
                paths: ['*']
      resources:
        cpu: 2
        memory: 2048
      deployment:
        strategy: Canary10Percent5Minutes
```
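To make the differences between the strategies concrete, the sketch below models what share of traffic has been shifted to the new version at a given time. It is a simplified model under stated assumptions: the function name is hypothetical, the first increment is assumed to happen at minute 0, and time spent in hook functions or rollbacks is ignored.

```typescript
type DeploymentStrategy =
  | 'Canary10Percent5Minutes'
  | 'Canary10Percent15Minutes'
  | 'Linear10PercentEvery1Minute'
  | 'Linear10PercentEvery3Minutes'
  | 'AllAtOnce';

// Returns the percentage of traffic routed to the new version after `minutesSinceStart`.
function shiftedTrafficPercent(strategy: DeploymentStrategy, minutesSinceStart: number): number {
  // Canary: 10% first, the remaining 90% after the waiting period.
  const canary = (waitMinutes: number) => (minutesSinceStart < waitMinutes ? 10 : 100);
  // Linear: another 10% at the start of every interval, capped at 100%.
  const linear = (intervalMinutes: number) =>
    Math.min(100, 10 * (Math.floor(minutesSinceStart / intervalMinutes) + 1));

  switch (strategy) {
    case 'Canary10Percent5Minutes':
      return canary(5);
    case 'Canary10Percent15Minutes':
      return canary(15);
    case 'Linear10PercentEvery1Minute':
      return linear(1);
    case 'Linear10PercentEvery3Minutes':
      return linear(3);
    default:
      return 100; // AllAtOnce
  }
}
```

Under this model, the linear strategies need nine intervals to reach full traffic, while the canary strategies jump from 10% to 100% in a single step.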

Hook functions

You can use hooks to perform checks using lambda-functions.

```yaml
resources:
  myLoadBalancer:
    type: application-load-balancer

  myApp:
    type: multi-container-workload
    properties:
      containers:
        - name: api-container
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/index.ts
          events:
            - type: application-load-balancer
              properties:
                loadBalancerName: myLoadBalancer
                containerPort: 80
                priority: 1
                paths: ['*']
      resources:
        cpu: 2
        memory: 2048
      deployment:
        strategy: Canary10Percent5Minutes
        afterTrafficShiftFunction: validateDeployment

  validateDeployment:
    type: function
    properties:
      packaging:
        type: stacktape-lambda-buildpack
        properties:
          entryfilePath: src/validate-deployment.ts
```

```typescript
import { CodeDeployClient, PutLifecycleEventHookExecutionStatusCommand } from '@aws-sdk/client-codedeploy';

const client = new CodeDeployClient({});

export default async (event) => {
  // read DeploymentId and LifecycleEventHookExecutionId from payload
  const { DeploymentId, LifecycleEventHookExecutionId } = event;

  // performing validations here

  await client.send(
    new PutLifecycleEventHookExecutionStatusCommand({
      deploymentId: DeploymentId,
      lifecycleEventHookExecutionId: LifecycleEventHookExecutionId,
      status: 'Succeeded' // status can be 'Succeeded' or 'Failed'
    })
  );
};
```

Code of validateDeployment function

Test traffic listener

When using the beforeAllowTraffic hook, you can use a test listener on your application-load-balancer to send test traffic to the new version of the workload before allowing any production traffic.

If your application-load-balancer uses default listeners (as in the example below), the test listener is automatically created on port 8080.

```yaml
resources:
  myLoadBalancer:
    type: application-load-balancer

  myApp:
    type: multi-container-workload
    properties:
      containers:
        - name: api-container
          packaging:
            type: stacktape-image-buildpack
            properties:
              entryfilePath: src/index.ts
          events:
            - type: application-load-balancer
              properties:
                loadBalancerName: myLoadBalancer
                containerPort: 80
                priority: 1
                paths: ['*']
      resources:
        cpu: 2
        memory: 2048
      deployment:
        strategy: Canary10Percent5Minutes
        beforeAllowTrafficFunction: testDeployment

  testDeployment:
    type: function
    properties:
      packaging:
        type: stacktape-lambda-buildpack
        properties:
          entryfilePath: src/test-deployment.ts
      environment:
        - name: LB_DOMAIN
          value: $ResourceParam('myLoadBalancer', 'domain')
        - name: TEST_LISTENER_PORT
          value: 8080
```

```typescript
import { CodeDeployClient, PutLifecycleEventHookExecutionStatusCommand } from '@aws-sdk/client-codedeploy';
import fetch from 'node-fetch';

const client = new CodeDeployClient({});

export default async (event: { DeploymentId: string; LifecycleEventHookExecutionId: string }) => {
  const { DeploymentId: deploymentId, LifecycleEventHookExecutionId: lifecycleEventHookExecutionId } = event;

  try {
    // test new version by using test listener port
    await fetch(`http://${process.env.LB_DOMAIN}:${process.env.TEST_LISTENER_PORT}`);
    // validate result
    // do some other tests ...
  } catch (err) {
    // send FAILED status if error occurred
    await client.send(
      new PutLifecycleEventHookExecutionStatusCommand({
        deploymentId,
        lifecycleEventHookExecutionId,
        status: 'Failed'
      })
    );
    throw err;
  }

  // send SUCCEEDED status after successful testing
  await client.send(
    new PutLifecycleEventHookExecutionStatusCommand({
      deploymentId,
      lifecycleEventHookExecutionId,
      status: 'Succeeded'
    })
  );
};
```

Code of testDeployment function

If your application-load-balancer uses custom listeners, you need to create an additional listener and specify testListenerPort in the deployment section of the multi-container-workload.

Default VPC connection

- Certain AWS services (such as Relational Databases) must be connected to a VPC (Virtual private cloud) to be able to run. For stacks that include these resources, Stacktape does 2 things:
  - creates a default VPC
  - connects the VPC-requiring resources to the default VPC.

- Container workloads are connected to the default VPC of your stack by default. This means that container workloads can communicate with resources that have their accessibility mode set to `vpc` without any extra configuration.

- To learn more about VPCs and accessibility modes, refer to VPC docs, accessing relational databases, accessing redis clusters and accessing MongoDb Atlas clusters.

Referenceable parameters

Currently, no parameters can be referenced.

Pricing

You are charged for:

Virtual CPU / hour:

- depending on the region $0.04048 - $0.0696

Memory GB / hour:

- depending on the region $0.004445 - $0.0076

The duration is rounded to 1 second with a 1 minute minimum. To learn more, refer to AWS Fargate pricing.
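For a rough feel of what these rates mean per month, the sketch below multiplies the hourly rates by the configured `cpu` and `memory`. It is an estimate under stated assumptions, not a billing formula: the function name is hypothetical, a month is assumed to have 730 hours, the workload is assumed to run continuously, and only the Fargate compute charges listed above are included.

```typescript
type FargateRates = { vcpuHourUsd: number; memoryGbHourUsd: number };

// Estimated monthly cost of continuously running `instances` workload instances.
function estimateMonthlyCostUsd(
  vcpu: number,
  memoryGb: number,
  rates: FargateRates,
  instances = 1,
  hoursPerMonth = 730
): number {
  const hourlyPerInstance = vcpu * rates.vcpuHourUsd + memoryGb * rates.memoryGbHourUsd;
  return hourlyPerInstance * hoursPerMonth * instances;
}

// Lower bounds of the per-region price ranges listed above.
const lowestRates: FargateRates = { vcpuHourUsd: 0.04048, memoryGbHourUsd: 0.004445 };

// Smallest configuration used on this page: cpu 0.25 and memory 512 MB (0.5 GB).
const smallestMonthly = estimateMonthlyCostUsd(0.25, 0.5, lowestRates);
```

Passing the `maxInstances` value as `instances` gives an upper bound for a fully scaled-out workload.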